Most financial institutions have AI governance in place.

There are checklists. Model cards. Deployment gates. Approval workflows. Compliance sign-offs. The frameworks exist, the teams are trained, and the documentation is filed correctly.

And yet something is still quietly breaking — not in a way that triggers an alert, not in a way that surfaces in a quarterly report. It breaks the way a clock drifts: imperceptibly, incrementally, until one day the time is wrong and nobody can say exactly when it stopped being right.

This is the real AI governance problem in financial services today.

We have solved governance for systems that stay still. Nobody has solved it for systems that change without you.

High-performing fintech teams already have governance covered. It lives in the algorithm review, the model card, and the deployment checklist. That is no longer the gap.

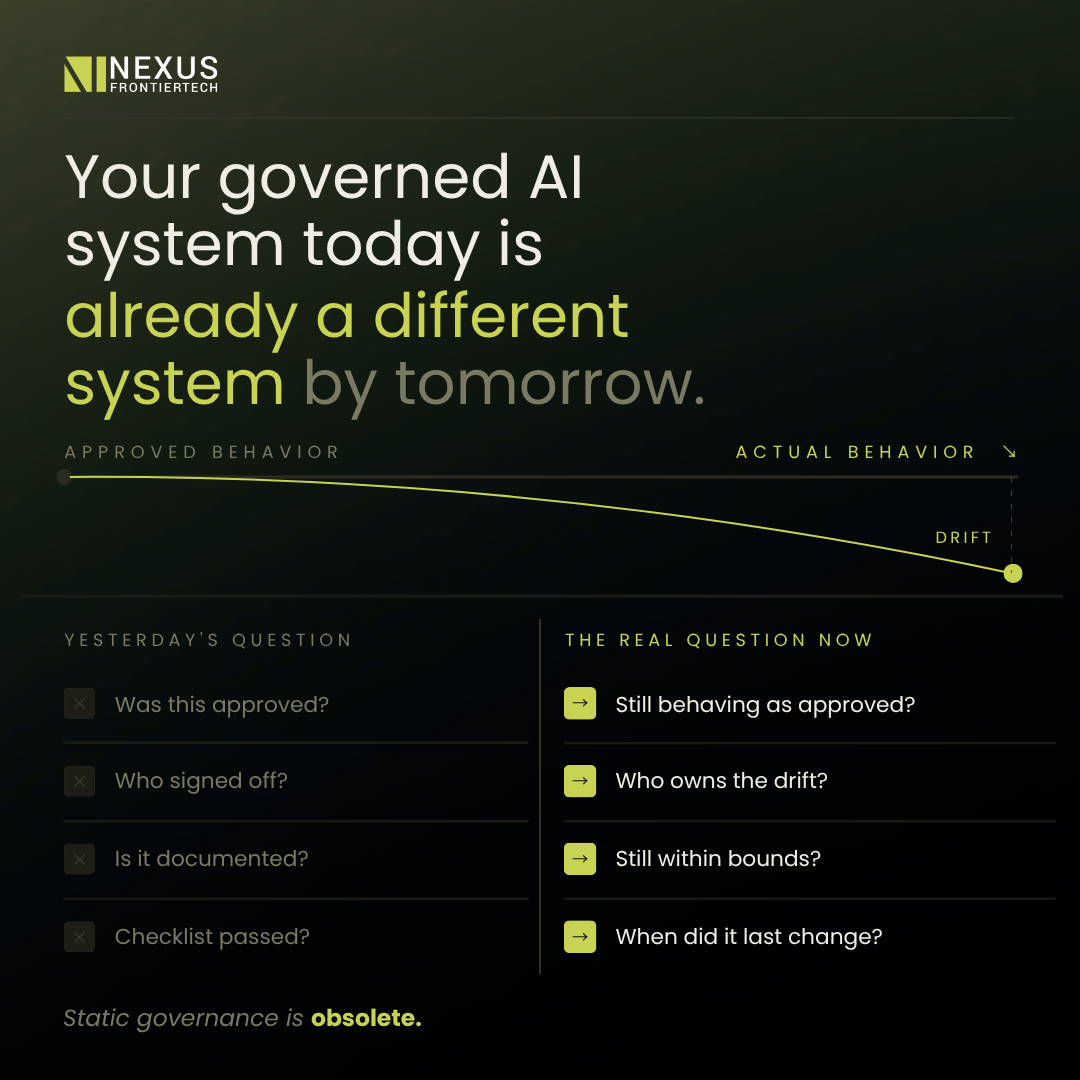

The gap is this: your governed AI system today will already be a different system tomorrow.

Not because your team made an error. Not because a deployment slipped through. The system changed around you, and your static governance framework had no way to keep up with it.

The causes are rarely dramatic. They accumulate quietly:

The foundation model your AI tooling sits on was updated by its vendor, with no announcement and no change record in your system. Market microstructure shifted, and your credit signal drifted outside its calibrated range. Forty edge cases your approved logic has never encountered walked through the door. A data provider changed a field format upstream.

None of these events triggered a review. None appeared in a model card. And yet your system is now behaving differently from the system your compliance team approved.

Traditional governance was designed for a world where you were the only agent making changes. In 2026, you are not.

And because of that, your framework is asking the wrong questions.

Most governance frameworks answer the foundational questions well:

The left column is necessary. The right column is where the risk actually lives – and most frameworks have no answer for it.

The institutions building durable AI governance programs are not building larger committees. They are treating behavioral observability as a first-class engineering concern, the same way Site Reliability Engineering (SRE) teams treat uptime.

Concretely, that means three things:

Behavioral baselines, not just approval gates. Capture what approved behavior actually looks like in production — outputs, distributions, decision patterns — so drift is detectable, not just arguable after the fact. The approved behavior is not a document. It is a measurable reference point.
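One way to turn approved behavior into a measurable reference point is to store the distribution of a model's outputs at approval time and compare live outputs against it. The sketch below uses the Population Stability Index (PSI), a drift metric common in credit risk. PSI is our illustrative choice, not a method the article prescribes. The function names, the quantile binning, and the 0.25 alert threshold (a widely cited rule of thumb, not a regulatory standard) are all assumptions.

```python
import bisect
import math
from typing import Sequence

# Hypothetical helper names; PSI is one common drift metric, not the only option.
PSI_ALERT_THRESHOLD = 0.25  # rule-of-thumb cutoff (assumption); tune per model


def quantile_edges(reference: Sequence[float], n_bins: int = 10) -> list[float]:
    """Interior bin edges taken from the approved (baseline) output sample."""
    ordered = sorted(reference)
    return [ordered[len(ordered) * i // n_bins] for i in range(1, n_bins)]


def bin_fractions(values: Sequence[float], edges: list[float]) -> list[float]:
    """Share of values in each bin; the first and last bins are open-ended."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect.bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(reference: Sequence[float], live: Sequence[float],
        n_bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of live outputs versus the approved baseline."""
    if not reference or not live:
        raise ValueError("both samples must be non-empty")
    edges = quantile_edges(reference, n_bins)
    expected = bin_fractions(reference, edges)
    actual = bin_fractions(live, edges)
    # eps keeps the logarithm finite when a bin is empty in either sample
    return sum((a - e) * math.log((a + eps) / (e + eps))
               for e, a in zip(expected, actual))


def has_drifted(reference: Sequence[float], live: Sequence[float]) -> bool:
    """True when live behavior has moved past the (assumed) alert threshold."""
    return psi(reference, live) > PSI_ALERT_THRESHOLD
```

In practice, the baseline would be frozen at approval time, for example as the score distribution from the validation run the compliance team signed off on. The check would then run on a schedule against a rolling window of production outputs.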

Provenance at the decision level. Not just “which model was used” but “which version, which context window, which prompt state produced this credit decision on this date.” Git blame for AI reasoning. When a regulator asks why a particular output was produced, the answer is available immediately, with a complete audit trail.

This is the standard Nexus FrontierTech’s clients already operate at. At one top-tier global bank, implementing this level of provenance reduced financial statement processing time by 80%, with a full audit trail and regulatory sign-off from day one.
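Here is a minimal sketch of decision-level provenance. It assumes each decision is logged with the model identifier and version, plus hashes of the exact prompt and context that produced it. The records are hash-chained, so editing, dropping, or reordering any past entry breaks verification, which is the property an audit trail needs. `DecisionRecord`, `ProvenanceLog`, and every field name are hypothetical and do not represent the OneNexus API.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional

GENESIS = "0" * 64  # placeholder hash that the first record links back to


def sha256_text(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class DecisionRecord:
    # Hypothetical schema: which model, which version, which prompt/context state.
    decision_id: str
    model_id: str
    model_version: str
    prompt_sha256: str
    context_sha256: str
    output: str
    timestamp: str
    prev_hash: str

    def digest(self) -> str:
        """Stable hash over every field, including the link to the previous record."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class ProvenanceLog:
    """Append-only, hash-chained decision log (in memory, for illustration)."""

    def __init__(self) -> None:
        self.records: list[DecisionRecord] = []
        self.head = GENESIS  # digest of the most recent record

    def append(self, decision_id: str, model_id: str, model_version: str,
               prompt: str, context: str, output: str) -> DecisionRecord:
        record = DecisionRecord(
            decision_id=decision_id,
            model_id=model_id,
            model_version=model_version,
            prompt_sha256=sha256_text(prompt),
            context_sha256=sha256_text(context),
            output=output,
            timestamp=datetime.now(timezone.utc).isoformat(),
            prev_hash=self.head,
        )
        self.records.append(record)
        self.head = record.digest()
        return record

    def lookup(self, decision_id: str) -> Optional[DecisionRecord]:
        return next((r for r in self.records if r.decision_id == decision_id), None)

    def verify(self) -> bool:
        """Recompute the chain; an edited, dropped, or reordered record fails."""
        expected = GENESIS
        for record in self.records:
            if record.prev_hash != expected:
                return False
            expected = record.digest()
        return expected == self.head
```

With a log like this, answering a regulator's question about a given decision becomes a `lookup` plus a `verify`. A production system would likely persist the log to write-once storage and keep the full prompt and context alongside their hashes. Both are outside this sketch.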

Governance SLAs, not governance ceremonies. Define how long an unreviewed behavioral shift is acceptable before it triggers escalation. Not a quarterly review — an incident threshold. The same way an engineering team defines an acceptable error rate before paging someone. If behavior outside approved bounds persists beyond that window without review, it is treated as an incident.

This shifts governance from periodic audits to continuous, observable accountability.
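A governance SLA can be expressed as a small state machine. Once behavior leaves approved bounds, a clock starts. If nobody reviews the shift before the window expires, it escalates to an incident. The sketch below assumes some upstream check, such as a drift metric, supplies the in-bounds or out-of-bounds signal. `DriftTracker`, the status names, and the 24-hour window used in the test are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Status(Enum):
    WITHIN_BOUNDS = "within_bounds"
    PENDING_REVIEW = "pending_review"  # out of bounds, SLA clock still running
    REVIEWED = "reviewed"              # out of bounds, but a reviewer has signed off
    INCIDENT = "incident"              # out of bounds past the SLA with no review


@dataclass
class DriftTracker:
    """Hypothetical tracker for one model's out-of-bounds episodes."""
    sla: timedelta
    first_out_of_bounds: Optional[datetime] = None
    reviewed: bool = False

    def observe(self, out_of_bounds: bool, now: datetime) -> Status:
        if not out_of_bounds:
            # Back within approved bounds: reset, so a new shift needs a new review.
            self.first_out_of_bounds = None
            self.reviewed = False
            return Status.WITHIN_BOUNDS
        if self.first_out_of_bounds is None:
            self.first_out_of_bounds = now  # start the SLA clock
        if self.reviewed:
            return Status.REVIEWED
        if now - self.first_out_of_bounds > self.sla:
            return Status.INCIDENT
        return Status.PENDING_REVIEW

    def mark_reviewed(self) -> None:
        """Record a review of the current out-of-bounds episode, if one is open."""
        if self.first_out_of_bounds is not None:
            self.reviewed = True
```

Routing `Status.INCIDENT` into the same paging or ticketing path that handles production outages is what would turn the window from a guideline into an enforced threshold.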

The vibe coding era did not make governance irrelevant. It made static governance obsolete.

The next competitive moat in financial services is not who can build the fastest AI systems. It is who can detect when their fast-built systems have quietly become something else.

AI governance drift is not primarily a policy problem. It is an architecture problem. If your AI system does not separate the layer that reasons and plans from the layer that executes and outputs, governance is reactive by design – you are always reviewing what already happened, not enforcing what is allowed to happen.

At Nexus FrontierTech, OneNexus was built around this principle from the ground up. Our industrial agentic architecture separates orchestration from execution at the infrastructure level. Manager Agents enforce policy before any output leaves the system. Specialist Agents – covering Credit Analysis, ESG Assessment, KYC, and Trade Finance – produce deterministic, verifiable, traceable outputs.

Every decision carries a complete audit trail. Every deviation from approved behavior is visible in real time.
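The general pattern of separating planning from execution, with policy enforced in between, can be sketched in a few lines. This is a generic illustration under our own assumptions, not a description of how OneNexus or its Manager Agents are implemented. A planning layer proposes an action, every policy must approve it before the execution layer runs, and each outcome, including refusals, is written to an audit list. `ProposedAction`, `GatedExecutor`, and the example policies are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class ProposedAction:
    kind: str       # e.g. "credit_limit_change" (hypothetical action type)
    amount: float


@dataclass(frozen=True)
class Verdict:
    allowed: bool
    reason: str


Policy = Callable[[ProposedAction], Verdict]


def allowed_kinds(kinds: frozenset[str]) -> Policy:
    def check(action: ProposedAction) -> Verdict:
        if action.kind not in kinds:
            return Verdict(False, f"action kind {action.kind!r} not approved")
        return Verdict(True, "kind approved")
    return check


def max_amount(limit: float) -> Policy:
    def check(action: ProposedAction) -> Verdict:
        if action.amount > limit:
            return Verdict(False, f"amount {action.amount} exceeds limit {limit}")
        return Verdict(True, "amount within limit")
    return check


class GatedExecutor:
    """Runs an action only if every policy approves it; audits every outcome."""

    def __init__(self, policies: Iterable[Policy],
                 execute: Callable[[ProposedAction], str]) -> None:
        self.policies = list(policies)
        self.execute = execute
        self.audit: list[tuple[ProposedAction, Verdict]] = []

    def submit(self, action: ProposedAction) -> Verdict:
        for policy in self.policies:
            verdict = policy(action)
            if not verdict.allowed:
                self.audit.append((action, verdict))  # refusals are audited too
                return verdict
        result = self.execute(action)  # reached only after all policies pass
        verdict = Verdict(True, f"executed: {result}")
        self.audit.append((action, verdict))
        return verdict
```

Because the gate sits between the two layers, a refusal leaves the same audit record as an approval. This matches the distinction between enforcing what is allowed to happen and reviewing what already happened.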

The results are measurable. Clients operating on OneNexus have achieved an 87% cost reduction in ESG portfolio analysis, 10x faster credit analysis, and 97% accuracy in data parsing for funds accounting – all with full regulatory traceability built in from the ground up, not bolted on after the fact.

Whether risk, credit, and engineering teams at your institution are tackling behavioral drift together – or not talking to each other about it at all – is worth finding out.

Because the institutions that solve this first will not just be more compliant. They will be faster, more trusted, and more defensible when regulators start asking the questions that current frameworks were never built to answer.

One question is worth bringing to your next leadership meeting:

When did your team last verify that your AI is still behaving within the bounds of its original approval – not in documentation, but in live production?

If the answer takes more than a few minutes to find, that is the gap.

Nexus FrontierTech builds regulated AI infrastructure for financial institutions. OneNexus delivers verifiable, auditable, production-grade AI – with traceability built in from the ground up. Trusted by global banks across Asia and EMEA.
